Hello dear Senfcall-using people,

many thanks to everyone who joined our anniversary celebration a.k.a. the Lastfest on Thursday (15 April). We tested the beta version of BigBlueButton 2.3 (beta-3) and together found a number of problems and improvements. We were especially pleased by the great interest during the Q&A session that followed. Below we list some details on our technical findings, which are also of interest to other BigBlueButton hosters.

The server we used for stress testing is a Hetzner AX101 running Ubuntu Bionic and BigBlueButton 2.3 beta-3 (which has since been succeeded by beta-4). The server had previously been used for several weeks in our production infrastructure (running BigBlueButton 2.2 on Ubuntu Xenial). For the stress test, we reinstalled it from scratch and configured it as follows:

- We used this commit from infra.run's Ansible role as a basis.

- We modified the configuration in /etc/bigbluebutton/bbb-html5-with-roles.conf to use the maximum supported number of parallel Meteor Node.js processes, since this process was the major performance bottleneck for us in BigBlueButton 2.2.

- We used a setup with three Kurento instances, which, at the time, was not supported by the Ansible role. We therefore used a configuration created by Daniel Schreiber that utilizes a templated systemd unit in combination with a systemd target that requires all three Kurento instances. The unit file and configuration can be viewed here.

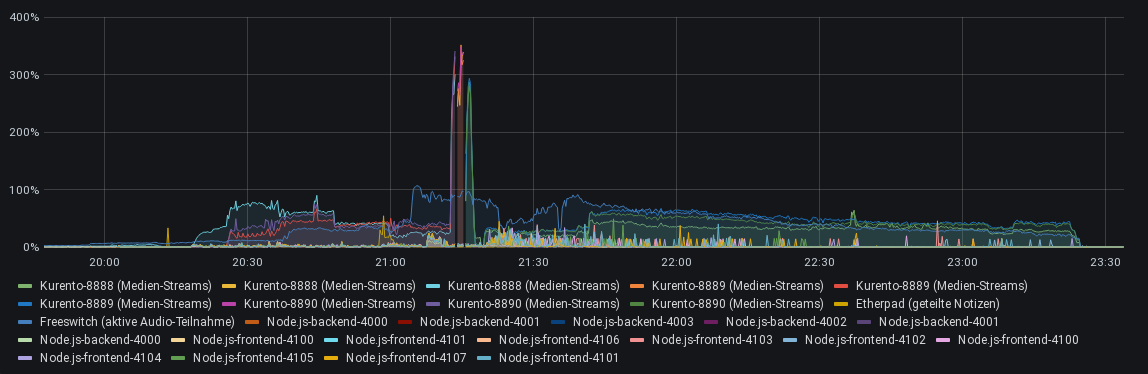

During the stress test, we monitored the server using our Grafana/Prometheus setup. Below, you can see the statistics produced by our Prometheus exporter using this config.

This is the timeline of our test:

- 20:15 We slowly let people in using the waiting room, so as not to cause an overload situation at the beginning. All users had to join via Kurento's audio, because we had disabled microphones for non-moderators at this point. A few moderator webcams were active. We gave a short introduction to the event and some info about our project and BigBlueButton, since many non-technical people were in the audience. We prepared users for the possibility of losing the connection during the test and asked them to rejoin a little while later if that happened. Slightly more than 100 users participated in the stress test. This number stayed constant throughout the session, save for situations where people lost their connection and rejoined.

- 20:58 Etherpad (shared notes) - We asked users to spam the shared notes.

- 21:04 Freeswitch/speech - We gave users permission to use the microphone and asked them to all speak or make noise at the same time. This also means they disconnected from Kurento's audio and connected to Freeswitch.

- 21:07 chat - We asked users to spam the chat.

- 21:12 webcam - We asked all users to enable their webcams and not move. We had planned a second test in which users would move around in order to produce more traffic, but we skipped it because the first test already gave catastrophic results.

- 21:18 whiteboard - We enabled the whiteboard and asked users to draw on it.

- 21:22 mass reload - The previous test overloaded most or all clients (see below), forcing people to reload the page.

In the end, we tried using breakout rooms, but that did not work due to an error in the Ansible setup that has since been fixed. We unfortunately forgot to test this beforehand. Sorry!

What went well?

- Chat was stable and performant in the load scenario. This is an improvement over 2.2.

- Etherpad was laggy during the (completely unrealistic) load, but recovered well and seemed to employ some rate limiting.

- Freeswitch was stable and handled the maximum load well without audio artifacts. The high CPU usage is expected and probably necessary, since it mixes a separate stream for every participant (containing everyone else's audio, but not their own).

- The Node.js/Meteor process is no longer a performance bottleneck, reaching a maximum of 50% CPU usage for a single measurement and staying in single digits or even below 1% most of the time. In our eyes, this is the most significant improvement over BigBlueButton 2.2 and will likely result in lower operational costs for us and other hosters, as server hardware can be used more efficiently.
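The per-participant mixing mentioned for Freeswitch above can be illustrated with a minimal sketch. It is not Freeswitch's actual implementation; the function name `mix_minus` and the use of plain integer samples are assumptions made for illustration only. It shows why one output stream has to be produced per participant.

```python
from typing import List


def mix_minus(samples: List[int]) -> List[int]:
    """Return one mixed sample per participant.

    Each participant hears the sum of everyone else's sample,
    but not their own (hypothetical helper, for illustration).
    """
    total = sum(samples)
    # Subtracting the participant's own sample from the total
    # yields the mix of all other participants.
    return [total - own for own in samples]


if __name__ == "__main__":
    # Three participants with one audio sample each.
    print(mix_minus([3, -1, 4]))  # [3, 7, 2]
```

Even with this shortcut of computing the total only once, the server still has to encode and send a distinct stream to every participant, which fits the CPU usage we observed.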

What went badly?

- Kurento-based audio and video were overwhelmed by the load. Users frequently reported issues connecting to the listen-only audio, and we had to abort the webcam test. At multiple points during that test, all users were disconnected from Kurento.

The logs suggest that Kurento crashed because it could not create more threads.

112310:2021-04-15T21:15:25,195438 28661 0x00007f49b19f0700 error glib GLib:0 () creating thread 'KmsLoop': Error creating thread: Resource temporarily unavailable

Since Kurento runs as a systemd service, systemd's resource constraints apply. Systemd derives the DefaultTasksMax property from the kernel's maximum PID: with the default kernel.pid_max of 32768, DefaultTasksMax is 4915, as described in systemd's man page. From a look at Kurento's implementation, it seems to spawn a new thread for each sending and receiving video stream, so the number of threads grows approximately with the square of the number of users in the session. In our load test, the 4915 tasks would therefore have been exhausted once about 71 participants had activated their video feed (71 × 71 = 5041), which seems plausible.
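The estimate above can be checked with a short calculation. This is a minimal sketch under the assumption that Kurento uses exactly one thread per sending and per receiving stream and nothing else; the function names are hypothetical and real thread counts will differ somewhat.

```python
import math


def estimated_threads(participants: int) -> int:
    """Threads needed if every participant shares video with all others.

    Assumption: one thread per sent stream plus one thread per
    received stream (each participant receives from all others).
    """
    sending = participants
    receiving = participants * (participants - 1)
    return sending + receiving  # equals participants ** 2


def max_participants(task_limit: int) -> int:
    """Largest participant count whose estimate stays within the limit."""
    return math.isqrt(task_limit)


if __name__ == "__main__":
    limit = 4915  # DefaultTasksMax with the default kernel.pid_max
    print(max_participants(limit))   # 70
    print(estimated_threads(70))     # 4900, still below the limit
    print(estimated_threads(71))     # 5041, exceeds the limit
```

Under this assumption, 70 simultaneous webcams would just fit and the 71st would push a single Kurento instance over the task limit.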

The pid_max setting can be raised using sysctl up to 2^22 (4194304):

sysctl kernel.pid_max=4194304

This results in a DefaultTasksMax of 18879, which can then be increased further in /etc/systemd/system.conf. We need to repeat the test with these improved settings.
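To see which limit applies on a given machine, the default can be recomputed from the kernel settings. The sketch below assumes the rule described in the systemd-system.conf man page, namely that DefaultTasksMax defaults to 15% of the minimum of kernel.pid_max, kernel.threads-max and the root cgroup's pids.max; older systemd versions may behave differently, and the function names are hypothetical. If the effective value is lower than 15% of pid_max, one of the other limits may be the smaller one, which is a possible explanation and not something verified here.

```python
from typing import Optional


def default_tasks_max(pid_max: int,
                      threads_max: Optional[int] = None,
                      cgroup_pids_max: Optional[int] = None) -> int:
    """15% of the smallest known limit, rounded down (assumed rule)."""
    limits = [v for v in (pid_max, threads_max, cgroup_pids_max)
              if v is not None]
    return min(limits) * 15 // 100


def read_kernel_limit(name: str) -> Optional[int]:
    """Read /proc/sys/kernel/<name>; return None if unavailable."""
    try:
        with open(f"/proc/sys/kernel/{name}") as handle:
            return int(handle.read().strip())
    except (OSError, ValueError):
        return None


if __name__ == "__main__":
    print(default_tasks_max(32768))    # 4915
    print(default_tasks_max(4194304))  # 629145
    # On Linux, compare against the values of the running system.
    pid_max = read_kernel_limit("pid_max")
    if pid_max is not None:
        print(default_tasks_max(pid_max, read_kernel_limit("threads-max")))
```

The value systemd actually uses can be compared with this estimate via `systemctl show --property DefaultTasksMax`.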

Additionally, connecting to Kurento-based services often took a very long time, suggesting there may be some kind of bottleneck inside Kurento when multiple users establish connections at the same time. This problem also occurs in BigBlueButton 2.2.

- The presenter's UI went blank (dark-blue background) while observing incoming poll results with many participants. They had to refresh the page, losing the poll results in the process. This was reproducible with increasingly lower numbers of participants. During the first attempt, it crashed at 96 poll responses. This problem needs further investigation, since the server log files are of no help.

- The collaborative whiteboard drawing did not work. Users would see their own drawings in a slightly lighter color that usually indicates that the drawing is not yet complete. Users would not see everyone else's drawings until they refreshed the page. Since we had >100 people drawing excessively, this resulted in a huge lag when reloading the page, because everyone's drawings needed to be rendered initially. One moderator finally managed to erase the drawing and restrict whiteboard access again by switching from Firefox to Chromium, which apparently is slightly more resistant against this kind of extreme load. Afterwards, the meeting was usable again.

We are happy about the improvements that BigBlueButton 2.3 offers over previous versions. The absence of the Node.js bottleneck has the potential to drastically change the way this software is deployed, improving the economic and ecological footprint of BigBlueButton through more efficient use of the server's hardware. There are also some improvements in BigBlueButton 2.3, such as the recording player, that are not relevant for Senfcall, since we disable the recording feature on our service. Last but not least, we hope the final 2.3 version will be released soon, so that we do not depend on Ubuntu Xenial past its end-of-life this month. To this end, we will continue working with upstream and the broader community to fix the issues we observed, especially the regressions compared to BigBlueButton 2.2.

We would like to thank:

- Daniel Molkentin (infra.run) and Daniel Schreiber (TU Chemnitz) for their work on configuration management for BigBlueButton 2.3 using Ansible and Chef,

- basisbit for coming up with the initial stress test concept last year,

- the BigBlueButton developers for their open source video conferencing system,

- our users, who made this possible with their participation and donations.